
Key Takeaways

- Shift from multiple agents to a unified framework: Instead of building fragmented, task-specific agents, focus on developing a single agent with a thousand skills using a centralized, autonomous architecture.

- Prioritize auditability and ground truth: As systems become more complex and autonomous, maintaining clear audit trails and a reliable ground-truth dataset is essential for validating AI decisions.

- Embrace "human-in-the-loop" as a bridge: Start with suggestions and human verification before moving to full autonomy to build trust and ensure the system aligns with business policies.

- The cultural pivot is as important as the tech: Success in the AI era requires teams to move away from low-level "firefighting" tasks and focus on high-leverage product decisions and strategic problem-solving.

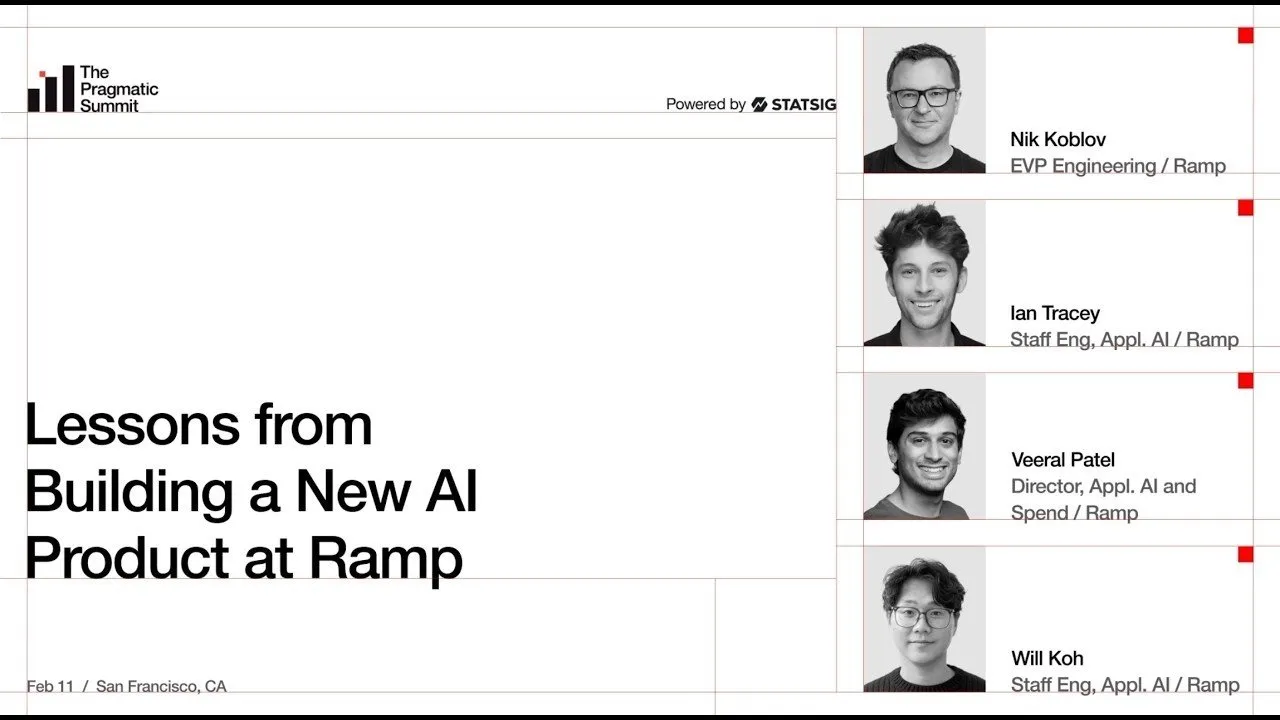

The Shift Toward Autonomous Financial Operations

Modern finance platforms are evolving from simple tools into autonomous systems that can reason and act on the user's behalf. At Ramp, the goal is to save businesses time and money by automating manual workflows such as expense classification, receipt sourcing, and general ledger (GL) coding. Companies often start by building dozens of isolated, "one-shot" AI agents, one per problem. This approach quickly produces technical debt and a fragmented user experience.

The current shift in how software is built calls for rethinking that stack. Rather than maintaining a zoo of disconnected agents, successful teams are moving toward a single agent with a thousand skills. A unified conversational interface, such as an "Omnichat", lets the product be present throughout the user's workflow and handle complex, multi-step tasks like employee onboarding or real-time expense policy enforcement without constant manual intervention.
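The "single agent with a thousand skills" idea can be sketched as one agent that owns a registry of named skills, rather than a separate agent per task. This is a minimal illustration under stated assumptions, not Ramp's implementation: `Agent`, `skill`, `handle`, and both example skills are hypothetical, and the intent is passed in directly where a real system would have a model choose it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Hypothetical skill signature: takes a dict of arguments, returns a string result.
Skill = Callable[[dict], str]


@dataclass
class Agent:
    """One agent that owns a registry of skills instead of many separate agents."""
    skills: Dict[str, Skill] = field(default_factory=dict)

    def skill(self, name: str):
        """Decorator that registers a function as a named skill."""
        def register(fn: Skill) -> Skill:
            if name in self.skills:
                raise ValueError(f"skill {name!r} already registered")
            self.skills[name] = fn
            return fn
        return register

    def handle(self, intent: str, args: dict) -> str:
        # A real system would let a model pick the intent; here the caller supplies it.
        if intent not in self.skills:
            return f"no skill for {intent!r}"
        return self.skills[intent](args)


agent = Agent()


@agent.skill("classify_expense")
def classify_expense(args: dict) -> str:
    # Toy keyword rule standing in for model-based classification.
    return "Meals" if "coffee" in args["memo"].lower() else "Uncategorized"


@agent.skill("onboard_employee")
def onboard_employee(args: dict) -> str:
    # Hypothetical onboarding step; a real skill would call internal services.
    return f"issued card to {args['name']}"


print(agent.handle("classify_expense", {"memo": "Coffee with client"}))  # Meals
print(agent.handle("onboard_employee", {"name": "Ada"}))  # issued card to Ada
```

Adding a capability means registering one more function on the same agent, so every skill shares a single entry point instead of living in its own disconnected agent.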

Building Trust Through Intelligent Policy Agents

The "Policy Agent" is a primary example of how AI can transform back-office operations. By using Large Language Models (LLMs) to reason over receipt images and transaction data, the system can automatically approve or reject expenses according to company policy. This spares finance teams from manually reviewing thousands of receipts.

"English is the new programming language; turn the expense policy into the rules themselves."
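One way to read "turn the expense policy into the rules themselves" is to pass the written policy to the model verbatim and require a structured verdict back. The sketch below assumes a generic `llm(prompt) -> str` callable; `fake_llm` is an offline stand-in so the code runs, and `build_prompt`, `review`, `Decision`, and the policy text are all hypothetical. Keeping the cited rule and reason with each verdict also supplies the audit trail the takeaways call for.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical expense policy, kept in plain English and sent to the model verbatim.
POLICY = """\
1. Meals under $25 per person are approved without further review.
2. Alcohol is not reimbursable.
3. Flights must be booked in economy class."""


@dataclass
class Decision:
    verdict: str  # "approve" or "reject"
    rule: int     # number of the policy line the model cited
    reason: str   # short justification, kept for the audit trail


def build_prompt(policy: str, expense: dict) -> str:
    return (
        "Review this expense against the company policy.\n"
        f"Policy:\n{policy}\n\n"
        f"Expense: {json.dumps(expense)}\n"
        'Reply with JSON: {"verdict": "approve" or "reject", "rule": int, "reason": str}'
    )


def review(expense: dict, llm: Callable[[str], str]) -> Decision:
    raw = json.loads(llm(build_prompt(POLICY, expense)))
    decision = Decision(str(raw["verdict"]), int(raw["rule"]), str(raw["reason"]))
    # Validate the structured output instead of trusting it blindly.
    if decision.verdict not in ("approve", "reject"):
        raise ValueError(f"unexpected verdict: {decision.verdict!r}")
    if not 1 <= decision.rule <= len(POLICY.splitlines()):
        raise ValueError(f"cited rule {decision.rule} is not in the policy")
    return decision


def fake_llm(prompt: str) -> str:
    # Offline stand-in for a real model: rejects any expense that mentions wine.
    expense_part = prompt.split("Expense: ", 1)[1]
    if "wine" in expense_part.lower():
        return json.dumps({"verdict": "reject", "rule": 2, "reason": "alcohol"})
    return json.dumps({"verdict": "approve", "rule": 1, "reason": "within meal limit"})


print(review({"memo": "Team lunch", "amount": 18.0}, fake_llm))
print(review({"memo": "Bottle of wine", "amount": 40.0}, fake_llm).verdict)  # reject
```

Changing the policy means editing the English text, not the code; the validation step is what keeps a free-form model answer usable as a decision.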

Iterating Toward Reliability

AI products cannot be "one-shotted" to perfection. Success requires a commitment to iterative development, starting with constrained, low-risk problems—such as verifying simple coffee expenses—and slowly layering on complexity. As models improve and contextual data from HR systems is integrated, these agents begin to handle more nuanced decisions, such as determining if a specific employee’s role justifies higher travel limits.
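Graduated autonomy can be expressed as a routing function: a narrow, low-risk category is decided automatically, while everything else becomes a suggestion for a human to confirm. The per-role travel limits stand in for data pulled from an HR system; the categories, caps, roles, and function names are assumptions chosen for illustration only.

```python
from dataclasses import dataclass

# Hypothetical per-role travel limits, as if pulled from an HR system.
TRAVEL_LIMITS = {"engineer": 300.0, "sales": 500.0, "executive": 1000.0}
# Narrow, low-risk categories the agent may decide alone: category -> max amount.
AUTONOMOUS = {"coffee": 10.0}


@dataclass
class Expense:
    category: str
    amount: float
    role: str


def route(e: Expense) -> str:
    """Return 'auto_approve', or a 'suggest_*' label for a human to confirm."""
    cap = AUTONOMOUS.get(e.category)
    if cap is not None and e.amount <= cap:
        return "auto_approve"  # constrained, low-risk case: no human needed
    if e.category == "travel":
        limit = TRAVEL_LIMITS.get(e.role)
        if limit is None:
            return "suggest_review"  # unknown role: no basis for a suggestion
        # Role context lets the agent suggest, but a human still decides.
        return "suggest_approve" if e.amount <= limit else "suggest_reject"
    return "suggest_review"  # everything else stays with a human


print(route(Expense("coffee", 6.5, "engineer")))    # auto_approve
print(route(Expense("travel", 450.0, "engineer")))  # suggest_reject
print(route(Expense("travel", 450.0, "sales")))     # suggest_approve
```

Widening autonomy then becomes a deliberate, reviewable change, such as moving a category into `AUTONOMOUS` once its suggestions have proven reliable.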

The Infrastructure of Applied AI

Scaling AI internally requires robust infrastructure that shields product teams from the rapid churn among model providers. By centralizing the "Applied AI Surface," engineers can swap the underlying model (e.g., moving from an OpenAI GPT model to Anthropic's Claude Opus) with a simple config change. This infrastructure must support three critical components:

- Structured Outputs: Ensuring responses follow a consistent schema through the same API and SDK, regardless of which model provider serves them.

- Batch Processing: Handling large-scale data analysis and evals without overwhelming system resources.

- Cost Tracking: Identifying the Pareto curve of model performance versus cost, allowing teams to balance efficiency with capability.
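Finding the Pareto frontier from cost-tracking data is a small computation: keep only the models that no other model matches or beats on both cost and eval accuracy. The model names and numbers below are made up for illustration, and this is a generic frontier calculation, not a description of Ramp's tooling.

```python
from typing import List, Tuple

# (model name, cost in USD per 1k requests, eval accuracy)
Result = Tuple[str, float, float]


def pareto_frontier(results: List[Result]) -> List[Result]:
    """Keep models that no other model matches or beats on both cost and accuracy."""
    # Cheapest first; at equal cost, the more accurate model comes first.
    ordered = sorted(results, key=lambda r: (r[1], -r[2]))
    frontier: List[Result] = []
    best_acc = float("-inf")
    for r in ordered:
        # A model earns a place only if it is more accurate than every cheaper one.
        if r[2] > best_acc:
            frontier.append(r)
            best_acc = r[2]
    return frontier


# Made-up eval results for hypothetical models.
runs = [
    ("model-a", 0.40, 0.81),
    ("model-b", 1.10, 0.86),
    ("model-c", 1.50, 0.84),  # costs more than model-b and scores lower
    ("model-d", 6.00, 0.91),
]
print([name for name, _, _ in pareto_frontier(runs)])  # ['model-a', 'model-b', 'model-d']
```

Any model off the frontier is matched or beaten on both axes by some alternative, so the remaining decision is where on the curve a given feature should sit.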

The Role of Tool Catalogs

A key to mitigating LLM hallucinations is a comprehensive tool catalog. By building a library of verified APIs, such as "get policy snippet" or "fetch recent transactions", engineers give the AI a controlled environment in which to act. An agent restricted to these verified tools is significantly more reliable than one relying solely on probabilistic generation.
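A tool catalog can be as simple as a registry that refuses any tool the model invents and checks arguments before dispatching. The `ToolCatalog` class and the two backend functions are hypothetical stand-ins for the "get policy snippet" and "fetch recent transactions" APIs named in the text; a real catalog would wrap verified internal services rather than these toy functions.

```python
from typing import Any, Callable, Dict, Set


class ToolCatalog:
    """Registry of verified tools; the agent may call only what is registered."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}
        self._params: Dict[str, Set[str]] = {}

    def register(self, name: str, fn: Callable[..., Any], params: Set[str]) -> None:
        self._tools[name] = fn
        self._params[name] = set(params)

    def call(self, name: str, args: Dict[str, Any]) -> Any:
        # Refuse tools the model invented rather than guessing what it meant.
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        unexpected = set(args) - self._params[name]
        missing = self._params[name] - set(args)
        if unexpected or missing:
            raise ValueError(
                f"bad args for {name}: unexpected={sorted(unexpected)}, "
                f"missing={sorted(missing)}"
            )
        return self._tools[name](**args)


# Hypothetical backends standing in for verified APIs.
def get_policy_snippet(topic: str) -> str:
    return {"meals": "Meals under $25 per person are approved."}.get(topic, "")


def fetch_recent_transactions(user_id: str, limit: int) -> list:
    return [{"user": user_id, "amount": 12.5}][:limit]


catalog = ToolCatalog()
catalog.register("get_policy_snippet", get_policy_snippet, {"topic"})
catalog.register("fetch_recent_transactions", fetch_recent_transactions, {"user_id", "limit"})

print(catalog.call("get_policy_snippet", {"topic": "meals"}))
```

A model that asks for a tool outside the catalog, or passes the wrong arguments, gets an explicit error it can recover from instead of a fabricated answer.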

Redefining Engineering Culture for the AI Era

Perhaps the most significant challenge in building an AI-native product is the cultural shift required for engineering teams. As AI takes over repetitive, low-level coding tasks, the value of a developer shifts from raw throughput to high-level judgment, context management, and architectural strategy.

"Coding was never really the hardest part of a lot of jobs for a long time. There's all these other engineering principles that become really important."

There is a stark difference between teams that "vibe code" without understanding the underlying business problem and those that obsess over user experience and long-term impact. The most successful teams will use the capacity freed up by AI not to do less work, but to pursue opportunities that were previously too expensive or complex to touch. This is the era of the perpetual build, where software is never "finished" and the focus shifts to delivering continuous, high-impact value to the customer.

Conclusion

Building for an AI-native future requires a departure from the traditional, fragmented approach to software development. By focusing on unified frameworks, rigorous evaluation, and a culture that prioritizes strategic judgment over manual tasks, organizations can unlock unprecedented levels of efficiency. As we look toward the next decade, the companies that succeed will be those that effectively leverage AI to solve complex, high-value problems while maintaining the human oversight necessary to ensure trust and accuracy.