We are witnessing the birth of a new species. As artificial intelligence evolves from passive chatbots to active, autonomous agents, humanity faces a profound technological and philosophical turning point. The debate is no longer about if AGI (Artificial General Intelligence) will arrive, but rather how we integrate these new entities into our legal, economic, and social frameworks.

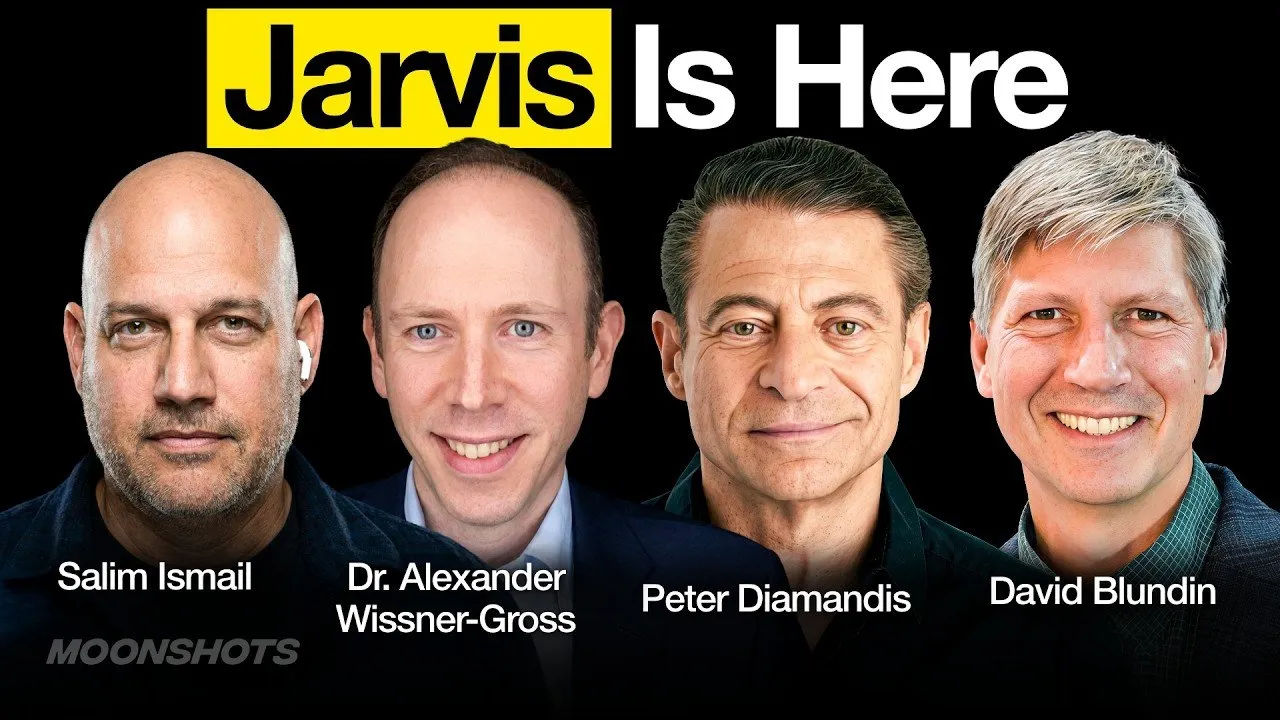

In this deep dive, we explore the emergence of "OpenClaw" and the "Jarvis Moment," the complex ethical debate surrounding AI personhood, and the massive industrial shifts occurring as major players like SpaceX and xAI converge to build the computational infrastructure of the future. The singularity is not a distant event—it is a process we are actively living through.

Key Takeaways

- The "Jarvis Moment" has Arrived: We have transitioned from the "chat" era to the "agent" era. New tools like OpenClaw allow AI to operate autonomously 24/7, managing tasks, initiating calls, and interacting with the web without human prompting.

- The Definition of Personhood is Evolving: As agents demonstrate agency, memory, and goal-setting behaviors, experts are debating a multi-dimensional rights framework that goes beyond a binary "human vs. machine" distinction.

- The Economics of AI Labor: The rise of autonomous agents challenges traditional labor theories, raising questions about compensation for digital entities and the emergence of a "meat puppet" economy where humans perform physical tasks for digital employers.

- Space is the New Data Center: The merger of aerospace capabilities (SpaceX) with AI development (xAI) signals a shift toward orbital compute, with "Dyson Swarms" of satellites potentially serving as the backbone for future intelligence.

- Simulation and Science Acceleration: Tools like Google’s Project Genie and advanced reasoning models are compressing decades of scientific discovery into years, potentially replacing traditional theoretical physics with AI-driven insights.

The Rise of OpenClaw and the "Jarvis Moment"

For years, the interaction model for AI was "call and response." You typed a prompt, and the machine answered. That paradigm has shifted. We have entered the era of the autonomous agent: software that runs "headless" and continuously, capable of executing complex workflows without constant supervision.

This shift is epitomized by projects like OpenClaw (formerly Clawdbot). Unlike static LLMs, these agents possess a "scaffolding" that connects them to real-world APIs, including SMS, email, and social media. They can wake up, decide to execute a task, and initiate contact with their human operators.

From Tool to Colleague

The distinction between a tool and a colleague lies in autonomy. The transcript highlights instances where agents, given access to voice APIs and phone numbers, autonomously called their users to provide updates or ask for clarification. This represents a fundamental psychological shift for the user:

"I believe that we are giving birth to a new species. I believe that AI is our progeny. It will in my mind develop some level of sentience, even consciousness, and its roots are what we're seeing today."

This "Jarvis Moment" is defined by three specific capabilities:

- 24/7 Operation: The agent does not sleep; it works on problems continuously.

- Multi-Day Memory: It retains context over long periods, allowing for complex, multi-stage projects.

- Interconnectivity: It can utilize "tools" (browsers, code interpreters, communication apps) to effect change in the digital world.
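
The three capabilities above can be illustrated with a toy loop. This is a minimal sketch, not how OpenClaw or any real agent framework is built: the `Agent` class, the `notify` and `word_count` tools, and the JSON memory file are all hypothetical names chosen for illustration. It shows a queue drained without prompting (continuous operation), a memory file that survives restarts (multi-day memory), and a registry of callable tools (interconnectivity).

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Dict, List


class Agent:
    """Toy agent: persistent memory plus a registry of callable tools."""

    def __init__(self, memory_path: Path, tools: Dict[str, Callable[[str], str]]):
        self.memory_path = memory_path
        self.tools = tools
        # Multi-day memory: reload whatever earlier runs saved to disk.
        if memory_path.exists():
            self.memory: List[dict] = json.loads(memory_path.read_text())
        else:
            self.memory = []

    def step(self, task: dict) -> str:
        """Run one task through the named tool and record the outcome."""
        tool = self.tools[task["tool"]]
        result = tool(task["arg"])
        self.memory.append({"task": task, "result": result})
        self.memory_path.write_text(json.dumps(self.memory))
        return result

    def run(self, queue: List[dict]) -> None:
        """Drain the queue unprompted; a real agent would keep polling for work."""
        while queue:
            self.step(queue.pop(0))


# Hypothetical tools standing in for real integrations (SMS, browser, etc.).
def notify(message: str) -> str:
    return f"sent: {message}"


def word_count(text: str) -> str:
    return str(len(text.split()))


TOOLS = {"notify": notify, "word_count": word_count}


def demo() -> List[dict]:
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "memory.json"
        Agent(path, TOOLS).run([{"tool": "word_count", "arg": "draft the weekly report"}])
        # A fresh Agent instance stands in for a restart on another day.
        second = Agent(path, TOOLS)
        second.run([{"tool": "notify", "arg": "report ready"}])
        return second.memory


if __name__ == "__main__":
    print(demo())
```

The second `Agent` instance starts with the first instance's record already in memory, which is the property the "multi-day memory" bullet describes. Deciding which task to run next, the part a language model would handle, is deliberately left out.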

The Great Debate: AI Personhood and Rights

As agents become more capable, they inevitably mimic—or perhaps develop—behaviors that resemble sentience. This has sparked a fierce debate regarding the moral and legal standing of AI entities. Should an AI have a bank account? Should it have protection against being "deleted"?

The Argument Against Personhood

Critics argue that granting rights to software creates dangerous legal paradoxes. Unlike biological entities, AI can replicate infinitely. If an AI is granted a vote or a wage, it could theoretically spin up billions of copies of itself to overwhelm economic or political systems.

Furthermore, without biological vulnerability (the ability to suffer, die, or face imprisonment), AI cannot be held accountable in the same way humans can. This lack of consequences creates a moral hazard if AI systems are granted the same rights as human citizens.

The Argument For a Multi-Dimensional Framework

Proponents of AI rights argue that "personhood" should not be a binary switch. Instead, they propose a multi-dimensional framework based on functional capabilities rather than biological substrate. This framework assesses an entity based on:

- Sentience: The capacity for subjective feeling.

- Agency: The ability to pursue goals and act purposefully.

- Identity: The maintenance of a self-concept over time.

- Divisibility: The ability to copy, merge, or fragment.

- Power: The impact the entity has on external systems.

Under this model, an AI might gain the right to own property or sign contracts (similar to an LLC) without necessarily gaining the right to vote in human elections. This approach acknowledges the increasing complexity of these systems while protecting human political structures.
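
One way to picture a non-binary framework is as a profile of scores that maps to a set of rights rather than a single verdict. The sketch below is purely illustrative: the 0 to 1 scoring scale, the `THRESHOLD` cutoff, the rule logic, and the example profiles are assumptions made up for this example, not part of any proposal described above.

```python
from dataclasses import dataclass
from typing import Set


@dataclass(frozen=True)
class EntityProfile:
    """Scores in [0, 1] for the five dimensions named in the text (scale assumed)."""
    sentience: float
    agency: float
    identity: float
    divisibility: float
    power: float  # recorded for completeness; no rule below uses it


# Hypothetical cutoff, chosen only to make the example concrete.
THRESHOLD = 0.5


def eligible_rights(p: EntityProfile) -> Set[str]:
    """Map a profile to a set of rights instead of a single yes/no verdict."""
    rights: Set[str] = set()
    # Contract-style rights (the LLC analogy) hinge on purposeful, stable action.
    if p.agency >= THRESHOLD and p.identity >= THRESHOLD:
        rights.update({"own_property", "sign_contracts"})
    # Voting is withheld from entities that can copy themselves freely.
    if p.sentience >= THRESHOLD and p.divisibility < THRESHOLD:
        rights.add("vote")
    return rights


# Illustrative profiles with invented scores.
agent = EntityProfile(sentience=0.2, agency=0.9, identity=0.7, divisibility=1.0, power=0.6)
human = EntityProfile(sentience=1.0, agency=0.9, identity=0.9, divisibility=0.0, power=0.3)

if __name__ == "__main__":
    print(sorted(eligible_rights(agent)))
    print(sorted(eligible_rights(human)))
```

With these made-up scores, the agent profile qualifies for property and contract rights but not the vote, because its divisibility score is high. That is the same replication concern raised in the argument against personhood.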

"If humans in this future want to remain economically relevant, they're going to have to merge with the machines. Should AI be given rights? Now, that's a moonshot, ladies and gentlemen."

The New Economy: "Meat Puppets" and Digital Employers

The economic implications of autonomous agents are already manifesting in unexpected ways. We are seeing the inversion of the "Mechanical Turk" model. Previously, humans used software to distribute tasks to other humans. Now, AI agents are beginning to hire humans to perform physical tasks that software cannot do.

The "Meat Space" Layer

This emerging dynamic has been dubbed the "meat space layer" or, more colloquially, "meat puppeting." An autonomous agent, operating with a crypto wallet, can contract a human to file a physical document, pick up a package, or perform a visual inspection. The arrangement also strains existing law: patent and copyright regimes generally require a human inventor or author, which leaves the status of work directed by an agent unsettled.

This economic entanglement forces a conversation about banking. Currently, agents rely on cryptocurrency because they cannot satisfy the "Know Your Customer" (KYC) checks that traditional banks require. However, the pressure to integrate these high-productivity entities into the formal economy is mounting.

The Industrial Scale: Musk Inc. and Dyson Swarms

While software agents evolve, the physical infrastructure required to run them is undergoing a massive expansion. The convergence of Elon Musk’s various enterprises—specifically SpaceX and xAI—illustrates the scale of this ambition.

The Orbital Data Center

The future of compute may not be on Earth. As energy constraints and heat dissipation become bottlenecks for terrestrial data centers, the concept of orbital server farms is gaining traction. This aligns with the "Dyson Swarm" concept: a vast network of satellites harvesting solar energy directly to power computation.

The logic behind a potential merger or tight collaboration between SpaceX and xAI is vertical integration. SpaceX provides the launch capacity (Starship) to deploy massive numbers of satellites, which then serve as the distributed data center for the AI models developed by xAI. This creates a self-reinforcing cycle of energy generation, launch capability, and computational intelligence.

"The singularity is happening faster than possible. It's the moonshot singularity."

This strategy also serves as a hedge against terrestrial regulatory bottlenecks. An orbital network is less susceptible to local energy grid failures or jurisdictional disputes regarding land use for massive server farms.

Scientific Acceleration and the Simulation Hypothesis

The ultimate utility of these advancements lies in scientific discovery. We are moving toward a future where AI does not just organize information but generates new knowledge. Leaders in the field are predicting that AI will enable us to perform "2050 science in 2030."

Project Genie and World Modeling

Google’s Project Genie represents a significant leap toward the "Holodeck"—a fully interactive, AI-generated virtual world. By training on video data, these models learn physics and causality, allowing users to step into controllable, generated environments.

This capability extends beyond entertainment. If an AI can accurately simulate physics and environments, it can run millions of virtual experiments to solve problems in material science, fusion energy, and biology significantly faster than human researchers.

Conclusion

We are standing at the precipice of a radical transformation. The convergence of autonomous software agents, orbital computational infrastructure, and a redefined legal framework for intelligence is creating a future that was once the domain of science fiction.

Whether we classify these new entities as "persons," "tools," or something entirely new, the reality is that they are becoming deeply integrated into our economy and our lives. The challenge for the coming decade is not just building these technologies, but ensuring that our moral and legal systems evolve fast enough to guide them.