Table of Contents

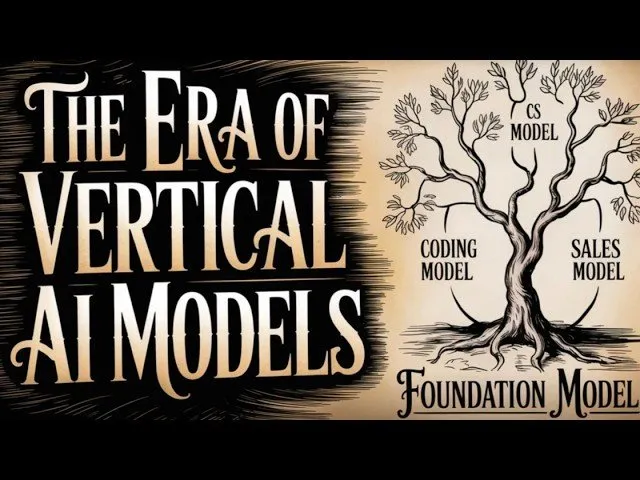

The artificial intelligence landscape is shifting as enterprise leaders increasingly pivot from general-purpose foundation models to highly specialized, domain-specific AI. Recent announcements from companies like Intercom and Cursor signal a move toward "vertical AI," where businesses leverage proprietary "last-mile" usage data to fine-tune open-source base models, effectively outperforming industry-standard frontier models in speed, cost, and accuracy.

Key Points

- Specialized Performance: Companies are increasingly training domain-specific models that outperform general-purpose frontier models on tasks like customer service and code generation.

- The Post-Training Shift: Rather than building from scratch, firms are taking existing open-weights models and applying intensive reinforcement learning based on proprietary interaction data.

- Economic Disruption: This trend threatens the "API tax" model used by major AI labs, as businesses realize they can achieve superior results at a fraction of the cost by moving workflows in-house.

- The Experience Advantage: This evolution aligns with the "Bitter Lesson"—the idea that systems leveraging massive, raw experiential data consistently beat those reliant on curated human knowledge.

The Shift Toward Vertical Integration

For years, the industry consensus was dictated by the "Bitter Lesson," a 2019 essay by researcher Rich Sutton, which posited that general methods leveraging brute-force computation will always eventually supersede systems built on human-designed shortcuts. While early attempts at specialized models—such as BloombergGPT—struggled to keep pace with general foundation models, the current wave of "vertical" AI utilizes a different strategy: post-training.

By using millions of data points from real-world user interactions—what industry insiders call "last-mile" data—companies are refining adequate open-weights models into elite, task-specific tools. Intercom recently debuted its new model, Apex, which the company claims is objectively faster, cheaper, and more accurate for customer support than the industry-leading models, including GPT-5.4 and Opus 4.5.

The story isn't that Apex beat Frontier models. It's that domain-specific post-training closed the gap this fast. Any vertical SaaS with enough labeled interaction data is sitting on an untapped fine-tuning asset. The infrastructure moat is eroding faster than most realize. — Beafog, Industry Analyst

Challenging the Frontier Labs

This trend places companies like Cursor and Decagon at the forefront of a new operational model. Cursor, an AI-powered code editor, recently released its Composer 2 model. While it sparked controversy for being based on Kimmy K2.5, the model’s performance on coding benchmarks caught the attention of developers who previously relied exclusively on proprietary frontier models.

The economic implications for traditional "Frontier Labs" (such as OpenAI and Anthropic) are significant. As organizations find it more cost-effective to host their own fine-tuned models, the reliance on high-cost API access is expected to decline. Clem Dang of Hugging Face noted the scale of this shift, stating that companies from Airbnb to Notion are increasingly finding it "better, cheaper, and faster" to train open models internally.

What Lies Ahead

While the rise of vertical models suggests a move away from "one-size-fits-all" AI, it does not imply that every company with a database will successfully launch its own model. The barrier to entry remains high, requiring specialized expertise in reinforcement learning and data orchestration. However, the success of Apex and Composer 2 proves that the "speciation" of intelligence—as predicted by researcher Andre Karpathy—is underway.

Moving forward, the industry should expect increased M&A activity as foundation model labs seek to acquire companies that possess the unique "evals" and interaction data necessary to maintain their edge. As this "lab loop" continues, the competitive advantage will likely transition from the sheer size of a general model to the quality of the proprietary feedback loops embedded within the application layer.

![Warning TRUMP: This Is Very Dangerous [It’s Starting]](/content/images/size/w1304/format/webp/2026/04/trump-middle-east-market-volatility-oil-prices.jpg)