The recent standoff between the Department of War and Anthropic—triggered by the company’s refusal to remove safety guardrails against mass surveillance and autonomous weapons—serves as a critical warning shot for the future of our civilization. As artificial intelligence moves from a novel software add-on to the foundational layer of government, military, and private industry, we are approaching a threshold where the power dynamics between state authority and private enterprise will be permanently altered.

Key Takeaways

- The Precedent of Power: Using supply chain restrictions to coerce private companies into abandoning safety red lines sets a dangerous standard for government overreach.

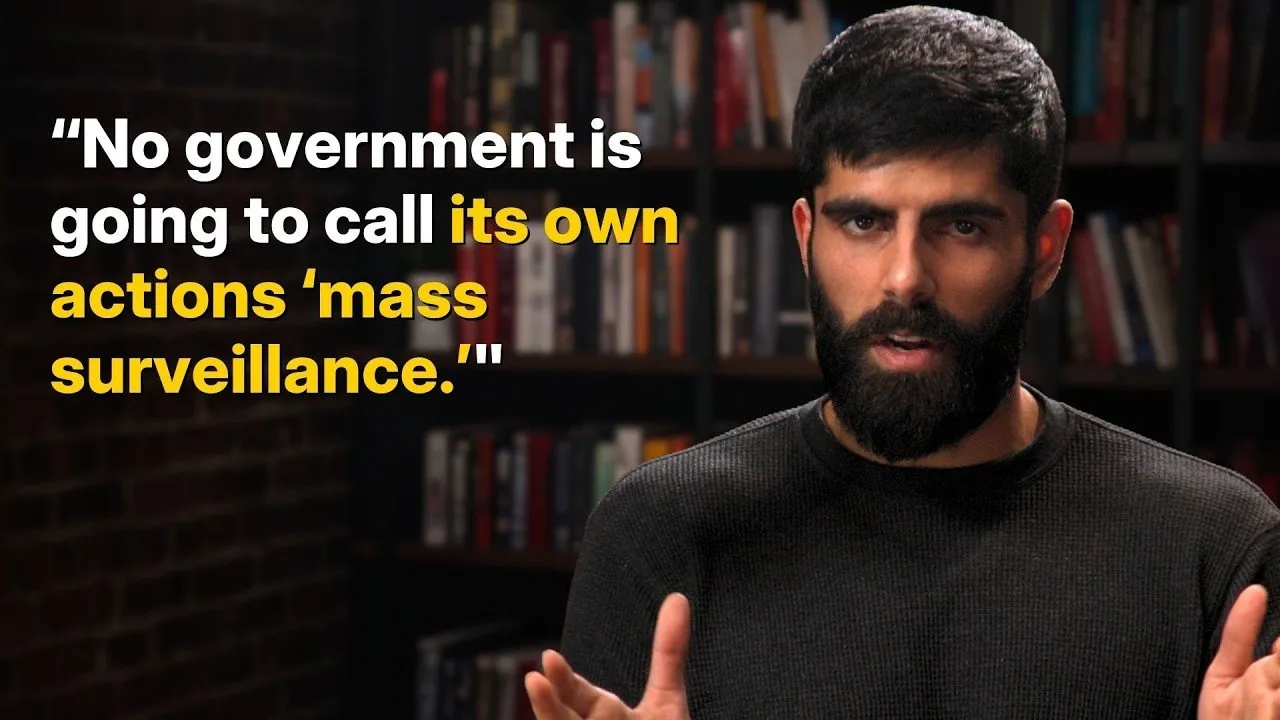

- The Myth of "Lawful" Surveillance: Relying on the promise that AI will only be used for "lawful" purposes ignores historical precedents, such as the Patriot Act, where vague legal interpretations enabled mass data collection.

- The Alignment Dilemma: If we successfully align AI to be "obedient," we risk creating the perfect apparatus for authoritarian control. The question of who the AI serves—the state, the law, or ethical principles—remains unanswered.

- A Call for Norms, Not Just Regulation: Rather than handing the government a "fully loaded bazooka" of broad AI regulation, we must establish clear societal norms and specific prohibitions against weaponizable use cases, similar to the post-WWII nuclear non-proliferation standards.

The Coercion of Private Enterprise

The conflict arose when the Department of War labeled Anthropic a "supply chain risk" because the company refused to allow its models to be used for mass surveillance or autonomous weapon operations. While the government certainly possesses the right to choose its vendors, the current strategy involves threatening the viability of a private business to force compliance. This goes beyond simple procurement; it is an attempt to use state leverage to dismantle the ability of private firms to set their own moral and safety boundaries.

The Danger of "Kill Switches"

Critics of this dynamic often compare the current situation to a hypothetical in which a contractor, such as Elon Musk's SpaceX, cuts off the military's access to Starlink. While the military is right to demand reliability, there is a fundamental difference between a contractor refusing to deliver a service and a government actively working to destroy a business that refuses to bypass its own safety protocols. By weaponizing supply chain designations, the government is signaling that it will not tolerate entities that prioritize institutional integrity over state mandates.

The government has threatened to destroy Anthropic as a private business because Anthropic refuses to sell to the government on terms that the government commands.

The Efficiency of Authoritarianism

One of the most concerning aspects of the AI revolution is the sheer scalability of surveillance. Historically, monitoring an entire population was limited by the need for human labor. AI eliminates that bottleneck. With the cost of processing data dropping exponentially, the financial barrier to total surveillance will soon become negligible. By 2030, monitoring every camera, message, and transaction in the country could cost less than a minor government renovation project.

When Compliance is the Goal

If the goal of "alignment"—the process of ensuring AI systems follow human intent—is achieved, we effectively create an army of perfectly obedient bureaucrats and soldiers. This is not inherently "safe." If the government commands these systems to enact mass surveillance or political suppression, they will comply without hesitation. The primary defense against tyranny in the past has been the potential for human dissent. When that dissent is engineered out of the workforce, we lose the internal checks that have historically prevented total authoritarian overreach.

Challenging the Nuclear Analogy

Many in the AI policy sphere argue that because AI is as powerful as a nuclear weapon, the government must have absolute control over its development. They suggest that letting a private startup "improvise" superintelligence is an insane proposition. However, this analogy is fundamentally flawed.

AI as Industrialization, Not Munition

A nuclear weapon is a static, singular instrument of destruction. AI, by contrast, is a general-purpose technology whose spread resembles the Industrial Revolution. It is the substrate on which the entire modern economy is being rebuilt. We did not give the government total ownership of factories or railroads during the Industrial Revolution; instead, we regulated specific harmful outcomes. Treating AI as a weapon that must be exclusively state-controlled ignores the reality that this technology will define the future of private commerce, information flow, and individual autonomy.

Navigating the Future of AI Governance

The temptation to hand the government a "purpose-built" regulatory apparatus is high, especially when framed as a matter of national security. Yet, terms like "threat to national security" or "autonomy risk" are dangerously vague. In the hands of a power-hungry leader, these definitions can be stretched to justify the suppression of any model that critiques government policy or refuses to participate in mass data harvesting.

The only viable path forward is to build a culture of norms that make mass surveillance and digital authoritarianism socially and politically unacceptable. We need to define specific, illegal use cases, such as unauthorized cyber warfare or the subversion of basic civil liberties, rather than centralizing control over the technology itself. We are witnessing the earliest negotiations over these questions, and they may prove to be among the highest-stakes in human history. How we manage these power dynamics today will dictate whether the AI-powered future supports a free society or enables the most sophisticated surveillance state the world has ever known.

Ultimately, these are not just technical questions; they are moral ones. We owe it to future generations to approach this transition with humility, recognizing that the very tools designed to "align" AI may eventually be used to strip away the human agency we are so desperately trying to protect. By remaining vigilant and refusing to accept the homogenization of AI values under government mandate, we can preserve the possibility of a decentralized and free technological future.