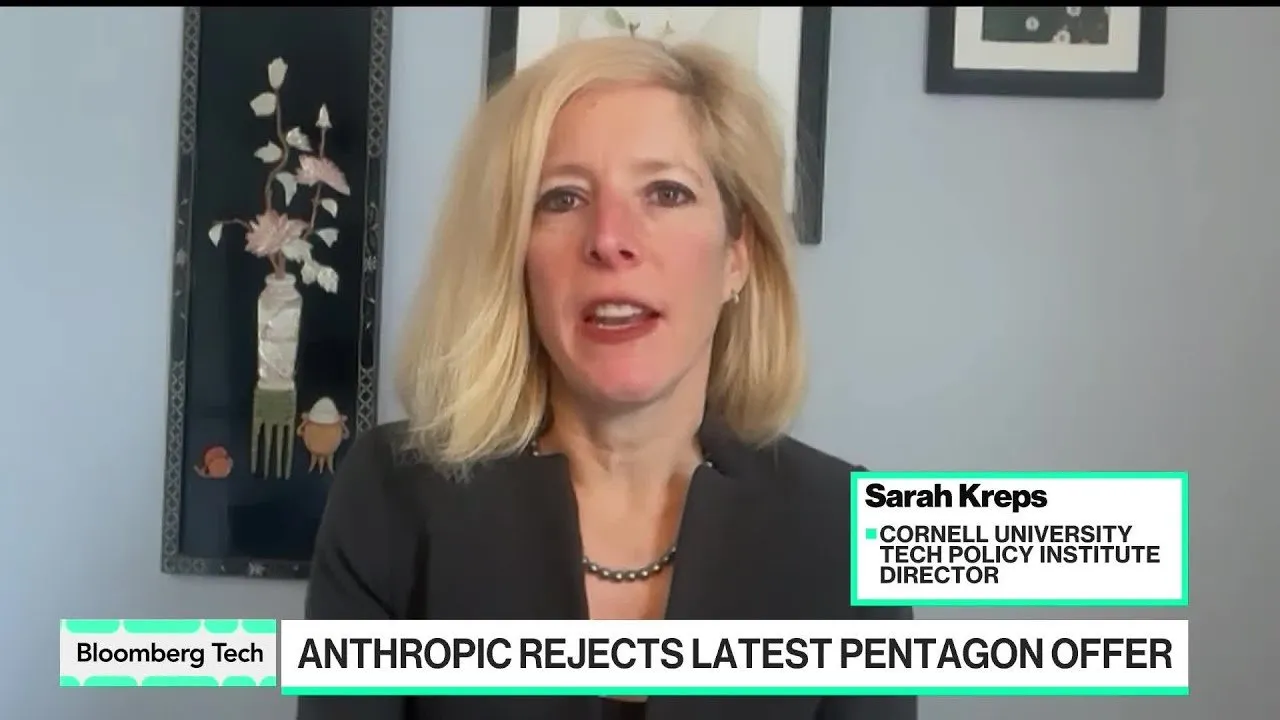

AI safety pioneer Anthropic is currently locked in a high-stakes negotiation with the U.S. Department of Defense over the ethical boundaries of military artificial intelligence. The standoff, which centers on a partnership facilitated by Palantir, highlights the growing tension between Silicon Valley’s "AI safety" ethos and the Pentagon’s operational requirements for national security. While Anthropic seeks strict prohibitions against the use of its models for autonomous lethal strikes and mass surveillance, defense officials are pushing for broader "lawful use" permissions.

Key Points

- Negotiations have stalled over a $100 million contract involving Anthropic, Palantir, and the Pentagon, despite months of discussions.

- Anthropic executives are reportedly concerned about a "slippery slope" regarding the definitions of autonomous weapons and mass surveillance of U.S. citizens.

- The Pentagon has signaled significant leverage, suggesting that a failure to reach an agreement could result in Anthropic being labeled a supply chain risk, effectively barring other defense contractors from using its technology.

- The conflict mirrors the 2018 controversy involving Google’s Project Maven, illustrating the recurring friction between civilian-developed AI and military application.

Ethical Guardrails and National Security

The core of the dispute lies in the transition of Claude, Anthropic’s flagship large language model, from a civilian tool to a "dual-use" technology with critical national security implications. Unlike traditional munitions or missile systems built exclusively by defense contractors, cutting-edge AI is emerging from the private sector. This shift has forced companies like Anthropic to balance their internal ethical constitutions with the lucrative revenue streams offered by government enterprise work.

Anthropic has expressed specific reluctance regarding how the military might define "human-in-the-loop" requirements for lethal operations. While the Pentagon maintains that it only intends to use the technology for lawful purposes, Anthropic leadership, including CEO Dario Amodei, remains wary of the ambiguity inherent in federal definitions of surveillance and autonomy.

The Breakdown of Negotiations

The tension became public following comments from Michael Stewart, the Department of Defense's Under Secretary, who indicated that the government had made substantial concessions before Anthropic unexpectedly signaled a break in talks. According to reports from Bloomberg, the Pentagon was surprised by the company’s decision to publish an article detailing the friction before a final deadline had passed.

"We’ve been negotiating in good faith on the Department of War side for about three months... we sent over a proposal that we thought made a lot of concessions to the language that Anthropic wanted. And then, without any notice, they published an article where we thought we were getting close saying that they were breaking off talks well before the deadline."

In response, Anthropic stated that while the latest proposal fell short of its requirements, the company remains committed to ongoing negotiations. The firm appears to be pursuing a strategy of "influence from the inside," attempting to shape the safe deployment of AI within the military rather than distancing itself from defense work entirely.

Historical Precedent and Market Risks

The current situation bears a striking resemblance to the 2018 Project Maven incident, in which Google employees successfully revolted against a Pentagon contract for AI-powered drone imagery analysis. However, the landscape today differs because of the rapid acceleration of generative AI capabilities and the increasing pressure on the Pentagon to modernize its tech stack to keep pace with global competitors.

The stakes for Anthropic extend beyond a single contract. If the Pentagon follows through on threats to designate the firm a supply chain risk, the move could cripple Anthropic’s ability to sell to any company within the vast military-industrial ecosystem. This federal leverage serves as a potent tool to force compliance from tech companies that rely on government-adjacent markets for growth.

As both parties continue to seek a middle ground, the outcome of these negotiations will likely set a precedent for how other AI labs, such as OpenAI and Meta, interface with the Department of Defense. Observers expect a compromise to be reached that allows for Claude’s integration while providing more granular definitions of prohibited uses, though the timeline for such an agreement remains fluid as the Pentagon evaluates its secondary supplier options.